I’ve reviewed the movie review sites and picked the top two for you: Metacritic and Movie Review Intelligence. Metacritic also serves up excellent TV, music & gaming guidance.

I’ve used Metacritic and Rotten Tomatoes for years, and the newer kid on the block, Movie Review Intelligence, since it came into being about two years ago. Now, movie review aggregators all perform the same basic trick: they scarf up big bunches of opinions, sift and distill who’s saying what, and spit out their magic rating. Ta-daah!

But how they handle the devilish details, such as which critics they deem worth listening to and how they stack those critics up against one another, can differ significantly and produce different results.

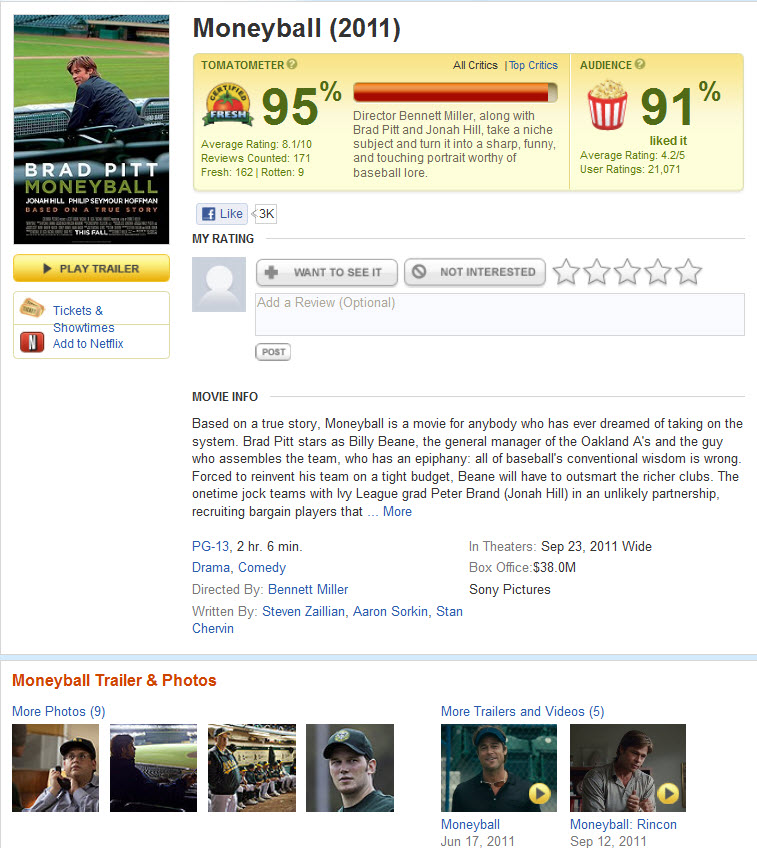

The best-known and most popular review aggregator, RottenTomatoes.com, has over 9 million visitors a month cramming into its produce aisle. It has a cute Tomatometer percentage score, with a “fresh” tomato for good reviews and a “rotten” one for not-so-good ones.

Squeezing the tomato and looking at the details of how it does what it does will show why I find the site my least favorite and least accurate, and why I don’t use it anymore. While the other two sites use reviews from professional critics to come up with their scores, Rotten Tomatoes also includes a bunch of citizen-reviewers who write on obscure websites, like Georgia’s self-proclaimed “entertainment man” Jackie K. Cooper. Huh, who?!

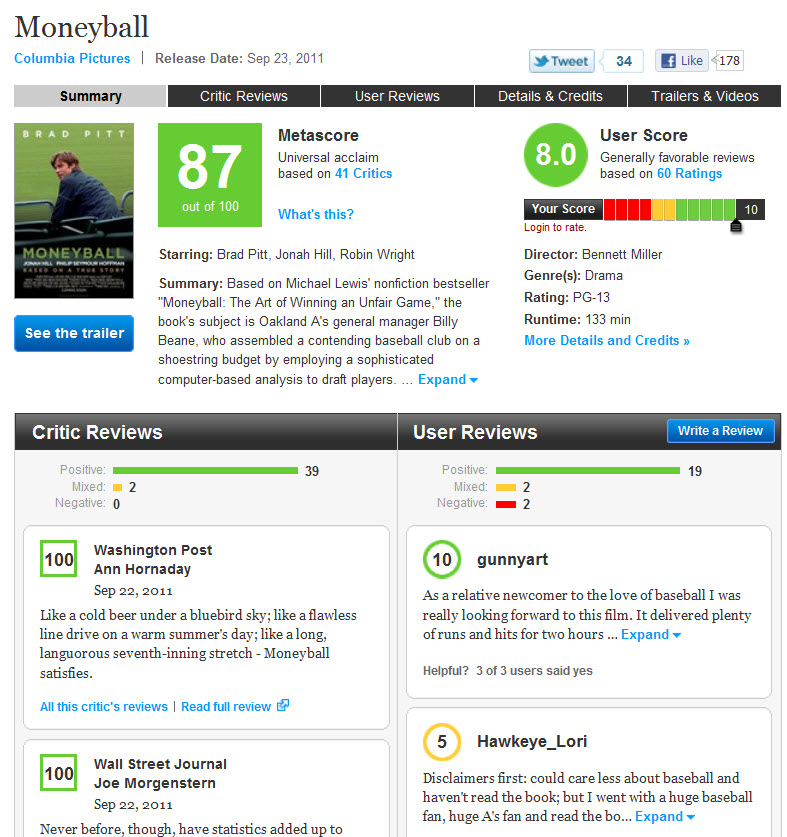

The best movie and TV review website is Metacritic

David Gross, a former market-research and 20th Century Fox studio executive who created Movie Review Intelligence, points out that because Rotten Tomatoes gives Time magazine and tiny websites equal weight, it penalizes critics with large circulations. “What’s going on hurts critics, it hurts moviegoers and it hurts the industry,” Gross says. “What difference does it make if some fan boy says ‘thumbs down’?”

MovieReviewIntelligence screenshot

Keep in mind that Rotten Tomatoes can give its coveted “fresh” rating to movies that many, or hypothetically all, of its counted reviewers don’t really love. Its score is simply the percentage of reviews that are favorable. If a film snags 20 positive reviews and 20 negative reviews, it’s 50% fresh, and if the split is 15 good to five bad, it’s 75%. But if all 20 of those critics give the equivalent of a B-minus letter grade, it’s 100% fresh, because all of the reviews were positive, even if only barely so.
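To make that arithmetic concrete, here is a minimal Python sketch of a Tomatometer-style percentage next to a plain average. It assumes each review has already been turned into a 0-100 score and that anything at or above a hypothetical cutoff of 60 counts as positive. The cutoff, the B-minus value of 67, the function names and the sample scores are all illustrative assumptions, not Rotten Tomatoes’ published rules.

```python
# A minimal sketch of a Tomatometer-style percentage versus a plain average.
# ASSUMPTION: a review counts as "positive" if its 0-100 score is >= 60.
# This cutoff is hypothetical, not Rotten Tomatoes' actual rule.
FRESH_CUTOFF = 60


def tomatometer(scores):
    """Percent of reviews at or above the (hypothetical) positive cutoff."""
    if not scores:
        raise ValueError("need at least one review")
    positive = sum(1 for s in scores if s >= FRESH_CUTOFF)
    return round(100 * positive / len(scores))


def average_score(scores):
    """Plain mean of the 0-100 scores, shown for contrast."""
    if not scores:
        raise ValueError("need at least one review")
    return round(sum(scores) / len(scores))


# The three cases from the text; the individual scores are made up.
even_split = [80] * 20 + [30] * 20   # 20 positive, 20 negative
mostly_good = [80] * 15 + [30] * 5   # 15 positive, 5 negative
all_b_minus = [67] * 20              # every critic gives a B-minus (assumed = 67)

print(tomatometer(even_split))     # 50
print(tomatometer(mostly_good))    # 75
print(tomatometer(all_b_minus))    # 100
print(average_score(all_b_minus))  # 67
```

The last two lines show the gap described above: a unanimous run of lukewarm B-minus reviews earns a perfect Tomatometer, while the average of those same reviews sits in the high 60s.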

Rotten Tomatoes screenshot

Metacritic and Movie Review Intelligence try to come up with an average score that reflects how much critics actually like a movie, rather than a ratio of raves to pans. If a movie on those two sites gets a score of 50, it means the reviews the site counted average out to 50 out of 100. The average review, we could say, got two out of four stars.

All three sites give every review they read a numerical score, and that can be a tricky trick to pull off, since many reviewers don’t give letter grades or stars. So coming up with a grade becomes a subjective process of appraising and mulling over each review. The assigned grades are then translated into numerical scores: a B-plus is an 83, a C-minus rates a 42, and so on. Rather than simply averaging those scores, Metacritic and MRI weight some reviewers more heavily than others.
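Here is a minimal Python sketch of that two-step process: map letter grades to numbers, then take a weighted rather than a plain average. Only the B-plus = 83 and C-minus = 42 values come from the text; the rest of the grade table, the function name, the sample reviews and the weights are assumptions for illustration. A weight could stand in for circulation or for prestige, but the real weights each site uses are not shown here.

```python
# A minimal sketch: letter grade -> 0-100 score, then a weighted average.
# ASSUMPTION: only "B+": 83 and "C-": 42 come from the article; the other
# grade values are a plausible ladder chosen for illustration.
GRADE_TO_SCORE = {
    "A+": 100, "A": 100, "A-": 91,
    "B+": 83, "B": 75, "B-": 67,
    "C+": 58, "C": 50, "C-": 42,
    "D+": 33, "D": 25, "D-": 16,
    "F": 0,
}


def weighted_average(reviews):
    """reviews: list of (letter_grade, weight) pairs.

    The weight is hypothetical; it could represent a critic's
    circulation or prestige.
    """
    total_weight = sum(w for _, w in reviews)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    weighted_sum = sum(GRADE_TO_SCORE[g] * w for g, w in reviews)
    return round(weighted_sum / total_weight)


# Hypothetical critics: one big outlet (weight 3) and two small sites (weight 1).
reviews = [("B+", 3.0), ("C-", 1.0), ("C-", 1.0)]

print(weighted_average(reviews))  # 67
print(round(sum(GRADE_TO_SCORE[g] for g, _ in reviews) / len(reviews)))  # 56 (unweighted)
```

Giving the single big-outlet review three times the weight pulls the result from 56 up to 67: the major publication’s B-plus counts for more than the two small sites’ C-minuses combined.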

Metacritic, instead of translating a review into a letter grade, has its staff score notices on a 0-100 scale in 10-point steps. “It’s still often hard to distinguish between what’s an 80 and what’s a 90,” says Marc Doyle, one of the founders of Metacritic.

While MRI weights reviews for audience size, Metacritic takes into account the prestige factor, a calculation it calls its “secret sauce” and one it won’t disclose. “Roger Ebert is weighted more than someone you’ve never heard of,” Doyle says. He also points out that when critics are consistently 75% favorable in their reviews of a movie, its Metacritic score is a 75. But that same movie could be 100% fresh on Rotten Tomatoes. “That’s a fundamental difference,” he says.

If you want very detailed explanations of how each one goes about its calculations, dig into each site’s own write-up: Metacritic, Movie Review Intelligence and Rotten Tomatoes.

Beyond all the calculations and the different methodologies each one uses, it’ll come down to your own subjective thumbs up or down on these sites.

But as I stated in the beginning, Metacritic is my own #1 fave and the one I use most often now. Where the rubber meets the road (reading its scores and then comparing them with the actual experience of watching the movie or DVD), it usually matches up well. Metacritic also seems to perform the trick best when I go to the trouble of reading reviews by my own favorite critics in some major publications and comparing all the prognostications.

I also prefer Metacritic for the clear and concise way it presents scores on its site. It uses a simple color code so your eye can quickly scan the results: red, yellow, green. And I think you’ll find even the simple “sort by name” and “sort by score” features quite useful.

As I also stated at the top, you might find my #2 choice, Movie Review Intelligence, to be your cup of tea if you’re in show biz and want more detail, especially when it comes to analyzing box office performance. You’ll see in the screen capture how it digs down into regional, press-type and media-value breakdowns.

So that’s my humble view of the review sites, and I hope I’ve helped sort out the puzzle of what’s what. I wish you well out there in all your movie adventures.

written by Los Angeles photographer & writer Gregory Mancuso